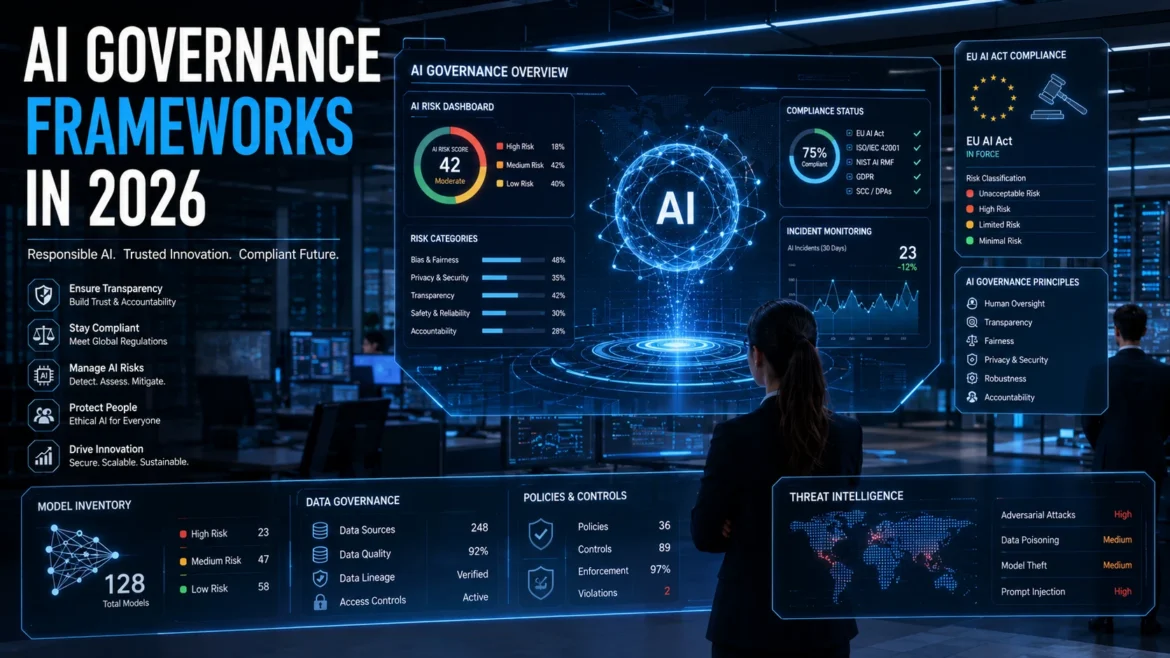

On August 2, 2026, the EU AI Act becomes generally applicable, including its requirements for most high-risk systems. The Act is the world’s first comprehensive legal framework for artificial intelligence, and it affects every company that provides or deploys AI systems in the European Union or whose AI outputs reach EU users. That includes American, Asian, and other non-EU companies.

But the EU AI Act is not the only framework demanding attention. The NIST AI Risk Management Framework in the United States, ISO/IEC 42001 internationally, and country-specific regulations in the UK, Canada, China, and Singapore are creating a global patchwork of AI governance requirements.

For businesses, this is not a distant policy discussion. It is an operational reality that requires immediate action. This guide breaks down the major governance frameworks, explains what they require, and provides a practical compliance roadmap for organizations at any stage of AI adoption.

What Is AI Governance (and Why Does It Matter Now)?

AI governance is the system of policies, processes, and controls that ensure artificial intelligence systems operate ethically, transparently, and in compliance with applicable regulations. It covers the entire AI lifecycle: from data collection and model training through deployment, monitoring, and retirement.

Why does it matter now? Three converging forces are making AI governance urgent.

Regulatory enforcement is beginning. The EU AI Act’s provisions on prohibited practices and AI literacy have applied since February 2025. Most high-risk AI system requirements take effect in August 2026. Fines for the most serious violations reach up to 35 million euros or 7% of global annual turnover, whichever is higher.

AI systems are making consequential decisions. Credit approvals, hiring recommendations, medical diagnoses, insurance pricing, and criminal justice assessments now involve AI. When these systems fail or discriminate, the harm is real and measurable.

Public trust depends on accountability. Consumers, employees, and investors increasingly expect organizations to demonstrate responsible AI use. Governance is not just a compliance exercise. It is a trust-building mechanism.

The EU AI Act: The World’s Most Comprehensive AI Law

Risk-Based Classification System

The EU AI Act organizes AI systems into four risk tiers. Your compliance obligations depend entirely on which tier your system falls into.

| Risk Level | Definition | Examples | Requirements |
| --- | --- | --- | --- |
| Unacceptable | AI that poses clear threats to fundamental rights | Social scoring by governments, real-time biometric surveillance in public spaces, manipulation of vulnerable groups | Banned entirely (enforceable since Feb 2025) |
| High Risk | AI used in critical areas affecting health, safety, or rights | Medical devices, hiring/recruitment AI, credit scoring, law enforcement, education assessment | Risk assessment, data governance, transparency, human oversight, accuracy testing, conformity assessment |
| Limited Risk | AI interacting with people or generating content | Chatbots, deepfake generators, emotion recognition systems | Transparency obligations: users must be informed they are interacting with AI |
| Minimal Risk | AI with negligible risk | Spam filters, AI in video games, inventory management | No specific obligations (voluntary codes of conduct encouraged) |
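
The four tiers in the table above can be encoded as a simple lookup so that every system in an inventory carries its obligations with it. The sketch below is illustrative only: the names `RiskTier`, `OBLIGATIONS`, `obligations_for`, and `may_deploy` are assumptions made for this example, and the obligation strings paraphrase the table rather than the legal text.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations paraphrased from the risk-tier table; illustrative, not legal text.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["banned: do not deploy"],
    RiskTier.HIGH: [
        "risk assessment",
        "data governance",
        "transparency",
        "human oversight",
        "accuracy testing",
        "conformity assessment",
    ],
    RiskTier.LIMITED: ["inform users they are interacting with AI"],
    RiskTier.MINIMAL: [],
}


def obligations_for(tier: RiskTier) -> list[str]:
    """Return a copy of the obligation checklist for a tier."""
    return list(OBLIGATIONS[tier])


def may_deploy(tier: RiskTier) -> bool:
    """Unacceptable-risk systems are prohibited outright."""
    return tier is not RiskTier.UNACCEPTABLE


for tier in RiskTier:
    print(tier.value, may_deploy(tier), obligations_for(tier))
```

Assigning the tier itself still requires legal and technical judgment; a lookup like this only makes the consequences of that judgment explicit and auditable.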

Key Compliance Deadlines

February 2, 2025 (already passed): Prohibited AI practices ban and AI literacy requirements for all staff interacting with AI systems.

August 2, 2025 (already passed): Rules for general-purpose AI (GPAI) models and governance structure establishment.

August 2, 2026: General applicability of the remaining provisions, including high-risk AI system requirements, conformity assessments, and enforcement mechanisms, except for the product-related rules that follow in 2027.

August 2, 2027: Requirements for high-risk AI systems that are regulated products under existing EU safety legislation.
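
For planning purposes, the milestones above can be turned into a small countdown. This is a minimal sketch: the `MILESTONES` labels are shortened for illustration, and `deadline_status` is a hypothetical helper name.

```python
from datetime import date

# Milestone dates as listed above; labels are shortened for illustration.
MILESTONES = {
    date(2025, 2, 2): "Prohibited practices ban and AI literacy",
    date(2025, 8, 2): "GPAI model rules and governance structures",
    date(2026, 8, 2): "High-risk requirements and enforcement",
    date(2027, 8, 2): "High-risk AI in regulated products",
}


def deadline_status(today: date) -> list[tuple[str, int]]:
    """Return (label, days_remaining) pairs in date order; negative means passed."""
    return [
        (label, (deadline - today).days)
        for deadline, label in sorted(MILESTONES.items())
    ]


for label, days in deadline_status(date.today()):
    state = f"{days} days left" if days >= 0 else f"passed {-days} days ago"
    print(f"{label}: {state}")
```

Passing the reference date as a parameter, rather than reading the clock inside the function, keeps the calculation easy to test and to rerun for a planned go-live date.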

Who Must Comply?

The EU AI Act applies to providers (developers) and deployers (users) of AI systems, regardless of where they are headquartered. If your AI system is placed on the EU market or its output is used within the EU, you must comply.

This extraterritorial reach means U.S., UK, and Asian companies serving EU customers face the same obligations as EU-based companies. The GDPR model of global applicability applies here, too.

Penalties for Non-Compliance

The penalties are structured by violation severity. Using prohibited AI practices can result in fines up to 35 million euros or 7% of worldwide annual turnover (whichever is higher). Non-compliance with most other obligations, such as data governance and transparency requirements, carries fines up to 15 million euros or 3% of worldwide turnover. Providing incorrect information to authorities carries fines up to 7.5 million euros or 1% of turnover.
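
To make the "whichever is higher" rule concrete, the sketch below computes the upper bound of a fine for a given turnover. It uses the caps set out in Article 99 of the Act (35 million euros or 7%, 15 million euros or 3%, 7.5 million euros or 1%). The tier keys and the `max_fine` helper are hypothetical names for this example, and actual fines are set case by case by the authorities.

```python
# Penalty tiers as (fixed cap in euros, percent of worldwide annual turnover).
# Figures follow Article 99 of the Act; the tier keys are illustrative labels.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligations": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine(tier: str, annual_turnover_eur: int) -> float:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = PENALTY_TIERS[tier]
    return max(fixed_cap, annual_turnover_eur * percent / 100)


# A company with 2 billion euros turnover: 7% exceeds the fixed cap.
print(max_fine("prohibited_practice", 2_000_000_000))
# A company with 100 million euros turnover: the fixed cap applies.
print(max_fine("prohibited_practice", 100_000_000))
```

For large companies the percentage dominates, which is why turnover rather than the headline euro figure usually drives exposure.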

NIST AI Risk Management Framework (U.S.)

The United States has not passed comprehensive federal AI legislation comparable to the EU AI Act. Instead, the NIST AI Risk Management Framework (AI RMF) provides a voluntary but widely adopted governance standard.

The NIST AI RMF organizes AI governance around four core functions.

Govern: Establish organizational policies, roles, and processes for AI risk management. Define accountability structures and decision-making authority for AI deployment.

Map: Identify and catalog AI systems across the organization. Understand the context, stakeholders, and potential impacts of each system.

Measure: Assess and quantify AI risks, including bias, accuracy, security, and privacy. Use both automated testing and human evaluation.

Manage: Implement controls to mitigate identified risks. Monitor AI systems in production. Maintain incident response and remediation processes.

While the NIST framework is voluntary at the federal level, several U.S. states (notably Colorado and Illinois) have passed AI-specific legislation that draws heavily from NIST guidance. Federal agencies increasingly reference the AI RMF in procurement requirements.

As organizations strengthen AI governance programs, securing machine learning systems against adversarial threats like data poisoning is becoming a critical compliance priority.

ISO/IEC 42001: The International Standard

ISO/IEC 42001 is the international standard for AI management systems. Published in December 2023, it provides a certification-ready framework that organizations can implement and audit against.

For multinational companies, ISO 42001 offers a practical advantage: it provides a single governance framework that maps to multiple regulatory requirements. An organization certified under ISO 42001 has a strong foundation for EU AI Act compliance, NIST alignment, and other national frameworks.

The standard covers AI policy, risk assessment, impact analysis, data management, model governance, human oversight, incident management, and continuous improvement.

Global AI Governance Landscape

| Region | Primary Framework | Approach | Enforcement Status |
| --- | --- | --- | --- |
| European Union | EU AI Act | Comprehensive, risk-based, mandatory | Partially active; full enforcement Aug 2026 |
| United States | NIST AI RMF + state laws | Sector-specific, mostly voluntary federal | Federal voluntary; state laws active |
| United Kingdom | Pro-Innovation AI framework | Sector-specific, principles-based | Non-statutory guidance; regulators applying |
| China | Multiple targeted regulations | Algorithm-specific, content-focused | Active enforcement |
| Canada | AIDA (proposed) | Risk-based, similar to EU approach | Pending legislative approval |
| Singapore | AI Verify + Model AI Gov Framework | Self-assessment, industry-led | Voluntary with industry adoption |

Building Your AI Governance Program: A Practical Roadmap

Phase 1: AI Inventory and Risk Classification (Months 1 to 3)

Catalog every AI system your organization develops, deploys, or procures. For each system, document its purpose, the data it processes, the decisions it influences, and the populations it affects.

Classify each system against the EU AI Act risk tiers. Even if you do not operate in the EU today, this classification exercise identifies your highest-risk systems and priorities. Use the EU’s AI Act Compliance Checker tool as a starting reference.
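
An inventory does not need specialized tooling to get started. The following sketch shows one possible record structure covering the fields described above, plus a simple ordering so the riskiest and not-yet-classified systems are reviewed first. The class name, field names, priority order, and example systems are all assumptions for illustration, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass
class AISystemRecord:
    """One inventory entry; field names are illustrative, not a prescribed schema."""
    name: str
    purpose: str
    data_processed: list[str]
    decisions_influenced: list[str]
    populations_affected: list[str]
    origin: str  # "developed", "deployed", or "procured"
    risk_tier: str = "unclassified"  # set to an EU AI Act tier once assessed


# Assumed review order: unclassified systems rank just below known high-risk ones.
TIER_PRIORITY = {"unacceptable": 0, "high": 1, "unclassified": 2, "limited": 3, "minimal": 4}


def prioritize(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """Order systems so the riskiest (and unclassified) ones are reviewed first."""
    return sorted(inventory, key=lambda record: TIER_PRIORITY[record.risk_tier])


# Hypothetical example systems.
inventory = [
    AISystemRecord("support-chatbot", "customer support", ["chat logs"],
                   ["ticket routing"], ["customers"], "deployed", "limited"),
    AISystemRecord("resume-screener", "candidate shortlisting", ["CVs"],
                   ["interview invitations"], ["job applicants"], "procured", "high"),
    AISystemRecord("spam-filter", "email filtering", ["email metadata"],
                   ["message delivery"], ["employees"], "procured", "minimal"),
    AISystemRecord("vendor-forecasting", "demand forecasting", ["sales data"],
                   ["stock orders"], ["suppliers"], "procured"),
]

for record in prioritize(inventory):
    print(record.risk_tier, record.name)
```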

Phase 2: Governance Structure and Policies (Months 2 to 6)

Establish an AI governance committee with representation from legal, compliance, engineering, data science, ethics, and business leadership. Single-function ownership of AI governance consistently fails because AI risks span technical, legal, and operational boundaries.

Draft and adopt core governance policies: AI acceptable use policy, risk assessment methodology, data governance for AI training data, human oversight requirements, transparency and disclosure standards, and incident response for AI failures.

Phase 3: Technical Controls and Testing (Months 4 to 9)

Implement the technical requirements that governance policies demand. For high-risk systems, this includes bias testing and fairness audits, accuracy and performance benchmarking, robustness testing against adversarial inputs, data quality and lineage documentation, and human-in-the-loop mechanisms for consequential decisions.

Build or adopt automated testing pipelines that run these assessments continuously, not just at deployment.
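
As one example of what an automated fairness check might look like, the sketch below computes per-group selection rates and their ratio (often called the disparate impact ratio). This is only one of many fairness metrics, and the 0.8 threshold is an assumed rule of thumb borrowed from U.S. employment practice, not a requirement of the EU AI Act. The function names and sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of favorable outcomes per group from (group, favorable) pairs."""
    totals: dict[str, int] = defaultdict(int)
    favorable: dict[str, int] = defaultdict(int)
    for group, is_favorable in outcomes:
        totals[group] += 1
        favorable[group] += int(is_favorable)
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(outcomes: list[tuple[str, bool]]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so rates are equal
    return min(rates.values()) / highest


# Hypothetical hiring-model decisions: (applicant group, shortlisted?)
decisions = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)
THRESHOLD = 0.8  # assumed review threshold, not a legal standard
ratio = disparate_impact_ratio(decisions)
print(f"ratio={ratio:.2f}, needs_review={ratio < THRESHOLD}")
```

Running a check like this on every model release, and on a schedule against production decisions, is what turns a one-off audit into continuous assessment.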

Phase 4: Documentation and Transparency (Months 6 to 12)

The EU AI Act requires extensive documentation for high-risk systems: technical documentation describing the system’s design, capabilities, and limitations; instructions for use that enable deployers to understand and oversee the system; records of testing results, risk assessments, and conformity procedures; and transparency measures informing users they are interacting with AI.

Build these documentation processes into your development lifecycle so they are maintained continuously, not retroactively assembled for audits.
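
One way to keep documentation continuous is to treat it as a release gate, the same way a failing test blocks a deployment. The sketch below assumes each system tracks which documentation artifacts exist. The artifact labels summarize the categories listed above and are not terms defined by the Act, and `release_gate` is a hypothetical helper.

```python
# Labels summarize the documentation categories described above;
# they are illustrative, not terms defined by the Act.
REQUIRED_ARTIFACTS = {
    "technical_documentation",
    "instructions_for_use",
    "testing_and_risk_records",
    "transparency_notice",
}


def missing_artifacts(available: set[str]) -> list[str]:
    """Return required artifacts not yet present, sorted for stable output."""
    return sorted(REQUIRED_ARTIFACTS - available)


def release_gate(system_name: str, available: set[str]) -> bool:
    """Pass only when every required artifact exists; report gaps otherwise."""
    gaps = missing_artifacts(available)
    if gaps:
        print(f"{system_name}: blocked, missing {', '.join(gaps)}")
        return False
    print(f"{system_name}: documentation complete")
    return True


release_gate("resume-screener", {"technical_documentation", "instructions_for_use"})
release_gate("support-chatbot", set(REQUIRED_ARTIFACTS))
```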

Phase 5: Monitoring, Audit, and Continuous Improvement (Ongoing)

AI governance is not a one-time project. It requires ongoing monitoring of deployed systems for performance drift, bias emergence, and accuracy degradation.
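
A basic form of drift monitoring compares recent accuracy on labeled production samples against the accuracy recorded at deployment. The sketch below is a minimal illustration: the 0.05 tolerance, the baseline value, and the sample data are assumptions, and real programs typically track several metrics (including per-group fairness) rather than accuracy alone.

```python
def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Fraction of predictions that match the ground-truth labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and equal length")
    correct = sum(int(p == y) for p, y in zip(predictions, labels))
    return correct / len(labels)


def has_drifted(baseline_accuracy: float, recent_accuracy: float,
                tolerance: float = 0.05) -> bool:
    """Flag the system when accuracy falls more than `tolerance` below baseline."""
    return (baseline_accuracy - recent_accuracy) > tolerance


# Hypothetical values: baseline measured at deployment, recent labeled sample.
baseline = 0.92
recent_predictions = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
recent_labels = [1, 0, 1, 0, 0, 1, 0, 1, 1, 1]
recent = accuracy(recent_predictions, recent_labels)
print(f"recent accuracy={recent:.2f}, drifted={has_drifted(baseline, recent)}")
```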

Schedule annual governance audits. Track regulatory developments (the EU is expected to release guidance and amendments as enforcement begins). Participate in AI regulatory sandboxes where available, as each EU member state must establish at least one sandbox by August 2026.

Expert Tips for AI Governance

- Start governance before full regulation hits. Organizations that wait until August 2026 to begin compliance will face rushed, expensive, and incomplete implementations. The companies that started in 2024 and 2025 will have mature, tested governance programs when enforcement begins.

- Use ISO 42001 as your baseline. For multinational organizations, ISO 42001 provides the most efficient governance foundation. It maps to EU AI Act requirements, aligns with NIST AI RMF, and provides audit-ready documentation structures.

- Invest in AI literacy across the organization. The EU AI Act requires AI literacy for all staff who interact with AI systems. This is not optional. Build training programs that cover basic AI concepts, governance policies, and role-specific responsibilities.

- Treat AI governance as a business enabler. Governance is not just a compliance cost. It is a competitive differentiator. Organizations with mature governance programs deploy AI faster (because risk assessment processes catch problems early), with fewer incidents, and with greater stakeholder trust.

- Plan for regulatory convergence. The EU AI Act is the first comprehensive AI law, not the last. Canada, Brazil, and other jurisdictions are developing similar frameworks. Building governance infrastructure that is flexible enough to accommodate evolving requirements avoids costly rework later.

Common AI Governance Mistakes

Treating governance as a legal department responsibility only. AI governance spans engineering, data science, ethics, compliance, and business operations. Legal can lead coordination, but governance fails without cross-functional ownership.

Focusing on documentation over operationalization. Governance policies that sit in binders (or SharePoint folders) without being embedded into development and deployment workflows do not reduce risk. Governance must be operational, not theoretical.

Underestimating the scope of “AI systems.” Many organizations discover during inventory that they use far more AI systems than expected. Spreadsheet macros with ML models, third-party tools with AI features, and automated decision systems in procurement all fall under governance requirements.

Ignoring third-party AI risk. Your governance program must cover AI systems you procure, not just those you build. If a vendor’s AI system creates harm while deployed in your operations, you share the regulatory liability.

Frequently Asked Questions

What is AI governance and why does it matter?

AI governance is the system of policies, processes, and controls that ensure AI systems operate ethically, transparently, and in compliance with regulations. It matters because AI systems increasingly make consequential decisions (credit, hiring, healthcare), regulators are enforcing compliance (EU AI Act fines reach 7% of global turnover), and public trust requires demonstrated accountability.

Does the EU AI Act apply to companies outside Europe?

Yes. The EU AI Act applies to any organization that places an AI system on the EU market or whose AI system’s output is used within the EU. This extraterritorial reach means U.S., UK, and Asian companies serving EU customers must comply with the same obligations as EU-based companies.

What happens if my company does not comply with the EU AI Act?

Penalties are tiered by violation severity: up to 35 million euros or 7% of global annual turnover for prohibited practices, up to 15 million euros or 3% for most other violations, such as data governance and transparency failures, and up to 7.5 million euros or 1% for providing incorrect information to authorities. The ban on prohibited practices has applied since February 2025, and the high-risk requirements become applicable in August 2026.

Your Next Step

AI governance is no longer a forward-looking initiative. It is a current-year operational requirement. The EU AI Act enforcement deadline is months away. NIST guidance is actively referenced in U.S. federal and state requirements. ISO 42001 certifications are becoming procurement prerequisites.

Start with an AI system inventory. Identify what you have, classify the risk levels, and assess the gaps between your current practices and the governance requirements that apply to your business. That inventory is the foundation on which everything else builds.

The organizations that treat governance as an opportunity rather than a burden will deploy AI faster, with greater trust, and with significantly less regulatory risk.
